Top data integrations for operating production data in Retool

Nobody should be more excited about the rise of AppGen internal tool builders than data analysts and engineers. You're the ones tasked with turning your company's data into something useful—operationalizing it so it actually drives decisions and outcomes.

Traditionally, that's meant waiting for an engineering sprint to get anything built, watching roadmaps stretch out for months. But now, data teams can build custom tools themselves—tools that go beyond static dashboards and solve real problems.

This guide covers the integrations that make that possible. We'll walk through the platforms data teams are connecting to and what they're building with them.

Key integrations at a glance:

- Relational & NoSQL databases like PostgreSQL, MySQL, MongoDB, DynamoDB

- Data warehouses like Snowflake, BigQuery, Redshift, Databricks

- Infra & storage like Amazon S3, AWS Lambda, Datadog

- Communication tools like Slack, Microsoft Teams, Twilio

First, a note on integration security

Before we dive into specific integrations, let's talk about what actually matters when you're connecting to production data. Being able to connect your data is table stakes, but if you’re going to deploy these tools into production, you also need security, reliability, and the ability to move fast without breaking things.

Features to look for in an enterprise AppGen platform

When evaluating an enterprise-ready app generation tool, look for the following features and capabilities. Get these right and you can ship secure, production-ready tools without the usual engineering bottlenecks.

Security and access control

When you're building tools that touch production databases, you need role-based access control (RBAC) so you can restrict access by team and environment, single sign-on (SSO) for centralized authentication, and the ability to hide sensitive query parameters from audit logs. Retool handles this through permission groups, resource-level permissions, and environment separation. That means you can give your support team filtered access to customer data without handing over production database credentials.
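To make the idea concrete, here is a minimal Python sketch of permission groups that grant access per resource and per environment. The group names, resources, access levels, and the `can_run` helper are hypothetical illustrations, not Retool's permission API. In Retool you configure this through permission groups in the admin settings rather than in code.

```python
from dataclasses import dataclass, field

# Hypothetical access levels, ordered from least to most privileged
ACCESS_LEVELS = {"none": 0, "use": 1, "edit": 2}

@dataclass
class PermissionGroup:
    name: str
    # Maps (resource, environment) -> access level name
    grants: dict = field(default_factory=dict)

def can_run(groups, resource, environment, required="use"):
    """Return True if any of the user's groups grants at least `required`."""
    needed = ACCESS_LEVELS[required]
    for group in groups:
        level = group.grants.get((resource, environment), "none")
        if ACCESS_LEVELS[level] >= needed:
            return True
    return False

support = PermissionGroup(
    "support",
    {("customers_db", "production"): "use"},  # can query, cannot change the resource
)
data_eng = PermissionGroup(
    "data_eng",
    {("customers_db", "staging"): "edit", ("customers_db", "production"): "edit"},
)

print(can_run([support], "customers_db", "production"))          # True
print(can_run([support], "customers_db", "production", "edit"))  # False
print(can_run([support], "customers_db", "staging"))             # False
```

Because every check is keyed on both resource and environment, the support group can query production data through an app without ever being able to edit the underlying resource.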

Production reliability

Look for query caching to avoid redundant calls to expensive data warehouses, configurable timeouts for long-running queries, pagination for large result sets, and connection pooling for efficient database management. These are the features that keep your operational tools running smoothly under load.
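Query caching is the easiest of these to reason about. The sketch below is a minimal time-to-live (TTL) cache keyed on the SQL text plus its parameters, in plain Python. `run_warehouse_query` is a hypothetical stand-in for a real warehouse call; in Retool, caching is typically a per-query setting rather than code you write yourself.

```python
import time

class TTLQueryCache:
    """Cache query results for `ttl_seconds`, keyed on SQL text plus parameters."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock  # injectable so expiry is easy to test
        self._entries = {}  # key -> (expires_at, result)

    def get_or_run(self, sql, params, run):
        key = (sql, tuple(params))
        now = self.clock()
        hit = self._entries.get(key)
        if hit is not None and hit[0] > now:
            return hit[1]  # fresh cached result: skip the warehouse
        result = run(sql, params)
        self._entries[key] = (now + self.ttl, result)
        return result

calls = []

def run_warehouse_query(sql, params):
    # Hypothetical stand-in for an expensive warehouse call
    calls.append((sql, params))
    return [("2024-01-01", 42)]

fake_now = [0.0]
cache = TTLQueryCache(ttl_seconds=300, clock=lambda: fake_now[0])
sql = "SELECT day, orders FROM daily_orders WHERE region = ?"

cache.get_or_run(sql, ["EU"], run_warehouse_query)
cache.get_or_run(sql, ["EU"], run_warehouse_query)  # served from cache
fake_now[0] = 301.0
cache.get_or_run(sql, ["EU"], run_warehouse_query)  # expired, runs again
print(len(calls))  # 2
```

Keying on parameters as well as SQL text matters: two users filtering by different regions must not share a cached result.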

Governance without losing speed

You need comprehensive audit logs that track every query execution, user action, and data access pattern for compliance. But governance shouldn't slow you down. Retool's centralized credential management, reusable Query Library, and approval workflows let you maintain control while still shipping tools quickly.

More than read-only access

You need tools that support write operations (INSERT, UPDATE, DELETE) with appropriate safeguards—approval gates for critical changes, environment separation between dev and prod, complete audit trails for compliance. This is what lets you build admin panels and incident response tools that actually solve problems instead of just displaying them.

Building with natural language, drag-and-drop, and code

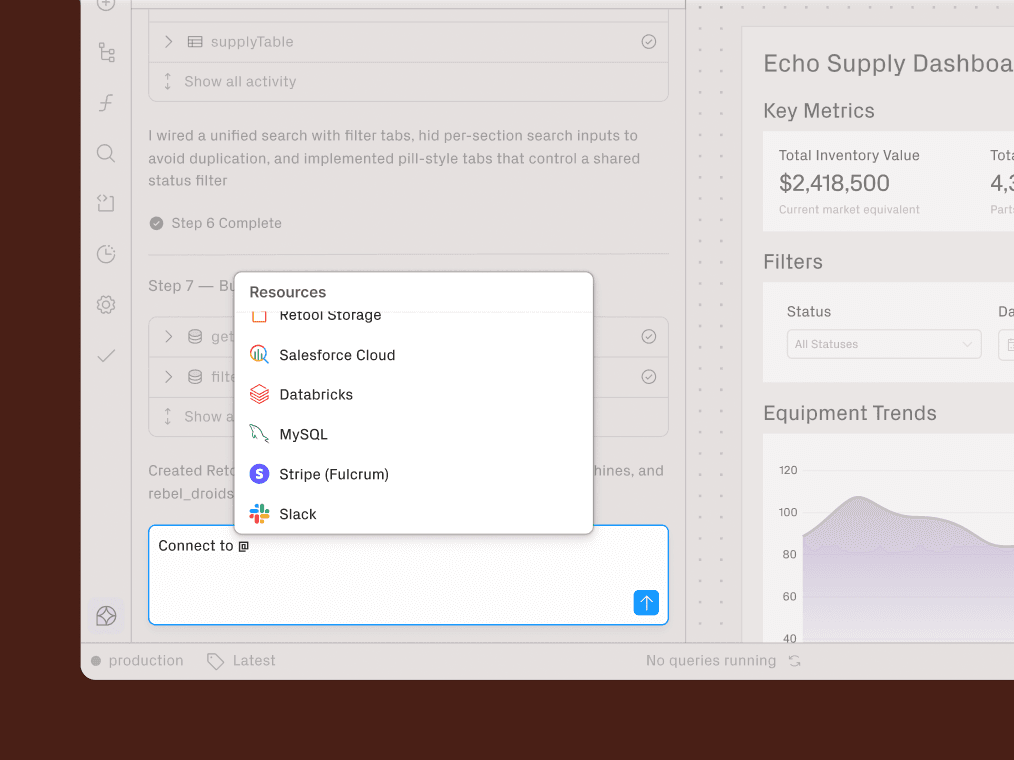

Retool can generate app scaffolding from prompts like "Create a revenue dashboard using my @Stripe data," but you're never locked into prompting: SQL, Python, and JavaScript remain available for complex logic. You get to apply your existing data engineering expertise while speeding up initial development.

Multi-integration workflows

Data teams work across dozens of systems simultaneously. An incident response workflow might query Snowflake for affected records, send alerts via Slack, trigger remediation through Lambda, and log everything to Datadog—all from a single interface. Retool's 50+ pre-built integrations plus REST API support make this possible without maintaining separate tools for each data source.

Core data systems integrations

Making data actionable starts with reliable connections to your core data systems. Whether you're building pipeline monitoring tools, data quality dashboards, or admin panels for business teams, direct access to production databases and warehouses enables you to create tools that don't just display information—they solve problems through write operations, approval workflows, and real-time updates.

Relational databases

Retool connects to both relational and NoSQL databases, giving you a unified interface for building tools across your entire data stack.

Retool supports all major relational database platforms, including PostgreSQL and MySQL.

NoSQL databases

Retool supports major document stores and key-value databases, including MongoDB and DynamoDB.

Data warehouses and analytics engines

Data warehouses are where most analytical work happens. Retool integrates with all major data warehouse and analytics platforms, including Snowflake, BigQuery, Redshift, and Databricks.

What teams are building

When building on top of their production databases and warehouses, data teams are able to use Retool to build things like:

- Customer support admin panels. Connect to your production database (Postgres, MongoDB) and CRM (Salesforce, Stripe) to build a unified customer lookup tool. Support teams can search by email or account ID, see purchase history and account details across systems, and update records or issue refunds—all without SQL access.

- Self-service reporting for business teams. Instead of fielding one-off data requests, build filtered views on top of Snowflake or BigQuery that let stakeholders answer their own questions. Marketing pulls campaign performance data, sales reviews pipeline metrics, finance generates their own reports—all through interfaces with built-in AI guardrails to prevent expensive queries or unauthorized data access.

- Approval workflows for sensitive operations. When operations teams need to update customer records, process refunds, or modify inventory, route those requests through approval gates. The requester submits through your Retool app, the appropriate manager reviews and approves in Slack, and only then does the UPDATE execute against production. Every step logs to your audit trail.
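The approval pattern above boils down to three steps: record the request, hold it until an approver signs off, and only then run a parameterized UPDATE, logging each step. Here is a minimal sketch using Python's built-in `sqlite3` as a stand-in for the production database. The table, the `submit`/`approve` helpers, the self-approval rule, and the in-memory audit list are all hypothetical; in Retool the approval would typically arrive from Slack or an app button rather than a direct function call.

```python
import sqlite3
from datetime import datetime, timezone

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT, credit REAL)")
conn.execute("INSERT INTO customers VALUES (1, 'ana@example.com', 0)")

pending = {}    # request_id -> request details (hypothetical queue)
audit_log = []  # every step is appended here

def log(event, **details):
    audit_log.append({"at": datetime.now(timezone.utc).isoformat(), "event": event, **details})

def submit(request_id, requester, customer_id, credit):
    pending[request_id] = {"requester": requester, "customer_id": customer_id, "credit": credit}
    log("submitted", request_id=request_id, requester=requester)

def approve(request_id, approver):
    request = pending.pop(request_id)
    if approver == request["requester"]:
        pending[request_id] = request  # leave it queued for a different approver
        log("rejected_self_approval", request_id=request_id, approver=approver)
        raise PermissionError("requesters cannot approve their own changes")
    log("approved", request_id=request_id, approver=approver)
    # The write happens only after approval, using bound parameters (no string-built SQL)
    with conn:  # commits on success, rolls back on error
        conn.execute(
            "UPDATE customers SET credit = ? WHERE id = ?",
            (request["credit"], request["customer_id"]),
        )
    log("executed", request_id=request_id)

submit("req-1", "sam", customer_id=1, credit=25.0)
approve("req-1", "priya")
print(conn.execute("SELECT credit FROM customers WHERE id = 1").fetchone())  # (25.0,)
print([entry["event"] for entry in audit_log])  # ['submitted', 'approved', 'executed']
```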

Infrastructure and pipeline operations integrations

Data pipelines don't exist in isolation. They connect to object storage for raw files, trigger cloud functions for processing, and feed metrics into observability platforms. Retool integrates with the infrastructure layer, letting you build tools that interact with the full pipeline rather than just the database endpoints.

Object storage and file-based workflows

Amazon S3, Google Cloud Storage, and Retool Storage integrations let you build file management interfaces without writing upload and download logic from scratch.

Serverless and event-driven processing

Retool integrates directly with AWS Lambda, letting you trigger serverless functions from internal tools. This is useful for processing logic that doesn't belong in frontend data transformations.

Observability, monitoring, and incident response

Retool integrates with Datadog for performance monitoring and error reporting, streaming spans and metrics so you can build dashboards that correlate observability data with app performance.

What teams are building

- File upload interfaces for business teams. Sales needs to upload lead lists, marketing wants to import campaign data, operations has to submit inventory updates—all stored in S3 but none of them have AWS credentials. Build upload interfaces that handle the S3 connection, confirm success, and optionally trigger downstream processing via Lambda. Business teams get self-service data ingestion without needing technical access.

- Batch processing controls for non-technical users. Connect to S3 and Lambda to let business teams trigger data processing jobs themselves: upload a file, validate it by running it through a Lambda function, and see the results in a table. If validation fails, users fix the issues and re-upload without waiting for the data team to troubleshoot.

- Pipeline monitoring dashboards. Pull job run times and failure rates from Datadog, combine with warehouse data showing row counts and freshness, and give your team a single view of pipeline health. When something breaks, they see both the infrastructure problem and the data impact, plus buttons to trigger reruns or escalate to on-call engineers.
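For the batch-processing example, the validating Lambda function can be quite small. This is a minimal sketch using the conventional Python Lambda handler signature `lambda_handler(event, context)`. The event shape (a `csv_text` field), the required columns, and the error format are assumptions for illustration; a real function would more likely read the uploaded object from S3 using a bucket and key passed in the event.

```python
import csv
import io

REQUIRED_COLUMNS = ["email", "company", "region"]  # hypothetical lead-list schema

def validate_rows(csv_text):
    """Return (valid_rows, errors) for a CSV upload."""
    reader = csv.DictReader(io.StringIO(csv_text))
    missing = [c for c in REQUIRED_COLUMNS if c not in (reader.fieldnames or [])]
    if missing:
        return [], [f"missing columns: {', '.join(missing)}"]
    valid, errors = [], []
    # Data rows start on line 2 of the file (line 1 is the header)
    for line_no, row in enumerate(reader, start=2):
        if "@" not in (row["email"] or ""):
            errors.append(f"line {line_no}: invalid email {row['email']!r}")
        else:
            valid.append(row)
    return valid, errors

def lambda_handler(event, context):
    # Assumed event shape: {"csv_text": "..."}; the app can show `errors` in a table
    valid, errors = validate_rows(event["csv_text"])
    return {"valid_count": len(valid), "errors": errors, "ok": not errors}

sample = "email,company,region\nana@example.com,Acme,EU\nnot-an-email,Globex,US\n"
print(lambda_handler({"csv_text": sample}, None))
# {'valid_count': 1, 'errors': ["line 3: invalid email 'not-an-email'"], 'ok': False}
```

Returning line numbers with each error is what lets business users fix the file themselves and re-upload.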

Alerting and incident response integrations

When critical data issues occur—pipeline failures, data quality anomalies, or system outages—the right people need to know immediately. Manually checking dashboards or querying logs creates response delays that can cascade into larger problems. Retool's messaging and collaboration integrations automate alerts and notifications, ensuring your team can respond to incidents as they happen.

Slack and Teams for operational alerts

Retool integrates with Slack and Microsoft Teams, letting users send notifications directly from apps and workflows. Both use OAuth 2.0 authentication, so users authorize through their existing accounts.

Email and SMS for critical failures

For more formal communication or alerts that need to reach people outside of Slack or Microsoft Teams, Retool supports SendGrid, Simple Mail Transfer Protocol (SMTP), Twilio, and OneSignal integrations.

What teams are building

- Automated stakeholder notifications. Set up scheduled workflows that run after key data processes complete. Query your warehouse to check if the weekly sales report is ready, then automatically send a Slack message to the sales team with a link to the updated dashboard. Or send email notifications to external partners when their data delivery finishes.

- Approval workflows via messaging. When business teams need to make sensitive changes—bulk customer updates, promotional pricing adjustments, inventory corrections—they submit requests through your Retool app, and notifications go to the appropriate approver in Slack or Teams. Approvers review and respond directly in their chat tool, execution only happens after approval, and everything logs to your audit trail.

- Incident alerts with context. When your nightly ETL detects issues—missing data, unexpected row counts, quality problems—automatically post to your team's Slack channel with error details and links to investigate. The alert includes what broke, what the expected state was, and a direct link to your Retool dashboard for troubleshooting.
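The incident-alert example relies on checks that compare what the ETL loaded against what was expected, then format a message with that context. Here is a minimal Python sketch. The thresholds, table name, and dashboard URL are hypothetical, and the output is a payload in the `{"text": ...}` shape that Slack incoming webhooks accept. Actually posting it (from a workflow or an HTTP request) is left out so the logic stays testable.

```python
from datetime import datetime, timedelta, timezone

def check_load(table, row_count, expected_min, last_loaded_at, max_age, now):
    """Return a list of human-readable problems for one nightly load."""
    problems = []
    if row_count == 0:
        problems.append(f"{table}: no rows loaded")
    elif row_count < expected_min:
        problems.append(f"{table}: {row_count} rows, expected at least {expected_min}")
    if now - last_loaded_at > max_age:
        problems.append(f"{table}: last load at {last_loaded_at:%Y-%m-%d %H:%M} UTC is stale")
    return problems

def build_slack_alert(problems, dashboard_url):
    """Build a Slack incoming-webhook payload, or None when every check passed."""
    if not problems:
        return None
    lines = ["Nightly ETL check failed:"] + [f"- {p}" for p in problems]
    lines.append(f"Investigate: {dashboard_url}")
    return {"text": "\n".join(lines)}

now = datetime(2025, 1, 2, 6, 0, tzinfo=timezone.utc)
problems = check_load(
    table="orders",
    row_count=1200,
    expected_min=10000,
    last_loaded_at=datetime(2025, 1, 1, 2, 0, tzinfo=timezone.utc),
    max_age=timedelta(hours=24),
    now=now,
)
payload = build_slack_alert(problems, "https://retool.example.com/apps/pipeline-health")
print(payload["text"])
```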

AI and ML integrations

AI capabilities are becoming part of the data engineering toolkit. Data teams can use language models to summarize data quality issues, classify unstructured text, and build natural language interfaces for querying data. Retool provides integrations for both model providers and embedding storage.

Model providers for operational analysis and automation

Retool connects to AI model providers including OpenAI, Anthropic, and Google Gemini. You can also configure custom AI providers for self-hosted or alternative models. These integrations work through Retool AI queries, which let you call LLMs directly from your apps and workflows.
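A common pattern is to pass rows from a database query into an AI query as part of the prompt. The sketch below only builds the prompt text in plain Python; how it gets sent (a Retool AI query or a provider SDK) is left out, and the row fields and instruction wording are hypothetical. Capping the number of rows keeps the prompt within the model's context window and cost budget.

```python
import json

def build_quality_summary_prompt(rows, max_rows=50):
    """Turn data-quality check results into a prompt asking an LLM for a summary."""
    not_passing = [r for r in rows if r["status"] != "pass"]  # failures and warnings
    shown = not_passing[:max_rows]  # cap rows so a large result set doesn't bloat the prompt
    note = f" (showing the first {len(shown)})" if len(shown) < len(not_passing) else ""
    header = (
        "You are helping a data team triage data quality issues.\n"
        f"{len(not_passing)} of {len(rows)} checks did not pass{note}.\n"
        "Summarize the likely root causes in three bullet points.\n\n"
    )
    return header + json.dumps(shown, indent=2)

rows = [
    {"check": "orders.not_null(customer_id)", "status": "fail", "failed_rows": 312},
    {"check": "orders.unique(order_id)", "status": "pass", "failed_rows": 0},
    {"check": "payments.freshness", "status": "warn", "failed_rows": None},
]
prompt = build_quality_summary_prompt(rows)
print(prompt.splitlines()[1])  # 2 of 3 checks did not pass.
```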

Vector storage and embeddings for contextual search & retrieval

Retool Vectors provides managed storage for text embeddings. You can generate embeddings using OpenAI embedding models like text-embedding-ada-002, store them in Retool Vectors, and query them for similarity search.

This enables retrieval-augmented generation (RAG) workflows, which typically involve:

- Embedding documentation or code comments from data infrastructure

- Storing those embeddings in Retool Vectors

- Building a search tool that finds relevant documentation based on natural language queries

Retool can also integrate with existing Amazon Bedrock Knowledge Bases for similar capabilities if teams already use AWS for their AI infrastructure.
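The retrieval step of a RAG workflow is a nearest-neighbor search over embeddings. Retool Vectors handles storage and search for you; the sketch below just illustrates the underlying idea with cosine similarity in plain Python. The three-dimensional vectors are made-up toy values: real embeddings from a model such as text-embedding-ada-002 have 1,536 dimensions and come from an API call.

```python
import math

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def top_k(query_vec, documents, k=2):
    """Rank (doc_id, vector) pairs by cosine similarity to the query."""
    scored = [(cosine_similarity(query_vec, vec), doc_id) for doc_id, vec in documents]
    scored.sort(reverse=True)
    return [doc_id for _, doc_id in scored[:k]]

# Toy embeddings for documentation pages (hypothetical values)
docs = [
    ("customer_ltv_definition", [0.9, 0.1, 0.0]),
    ("churn_calculation", [0.2, 0.9, 0.1]),
    ("warehouse_naming_conventions", [0.0, 0.1, 0.95]),
]
query = [0.8, 0.3, 0.0]  # stands in for "where's customer lifetime value stored"
print(top_k(query, docs, k=1))  # ['customer_ltv_definition']
```

The top-ranked documents are then passed to the language model as context for its answer.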

What teams are building

- Natural language query interfaces. Build search tools where business stakeholders describe what they need in plain English, such as "show me our top customers by revenue last quarter" or "which products have the highest return rates." A language model generates the SQL, the app executes it against your warehouse, and formatted results come back. Business teams get self-service analytics without learning SQL.

- AI-assisted analysis for non-technical users. Add language models to dashboards that explain what the data means. When sales reviews their pipeline, they can click "Explain this trend" and get human-readable analysis of why numbers moved. When operations sees anomalies in transaction data, the AI explains potential causes. This turns raw data into insights that don't require data team interpretation.

- Documentation search with embeddings. Store embeddings of your data dictionary, table schemas, and common analysis patterns in Retool Vectors. Business users search in natural language—"where's customer lifetime value stored" or "how do we calculate churn"—and get relevant documentation based on semantic similarity. They find answers without Slack messages to the data team.
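For the natural language query interface, it's worth treating model-generated SQL as untrusted input before it reaches the warehouse. This is a deliberately conservative sketch in plain Python: it accepts a single SELECT (or WITH) statement, rejects write and DDL keywords, and appends a row cap. The keyword list, the default limit, and the assumption of a dialect that supports LIMIT are all illustrative choices. A string check like this may reject some legitimate queries and complements, rather than replaces, database-level controls such as a read-only role.

```python
import re

# Keywords that indicate a write or DDL statement (conservative on purpose)
BLOCKED = re.compile(
    r"\b(insert|update|delete|merge|drop|alter|create|truncate|grant|revoke|copy)\b",
    re.IGNORECASE,
)

def guard_generated_sql(sql, max_rows=1000):
    """Return model-generated SQL with a row cap, or raise ValueError if it looks unsafe."""
    statement = sql.strip().rstrip(";").strip()
    if ";" in statement:
        raise ValueError("only a single statement is allowed")
    if not re.match(r"(select|with)\b", statement, re.IGNORECASE):
        raise ValueError("only SELECT queries are allowed")
    if BLOCKED.search(statement):
        raise ValueError("write or DDL keyword found")
    # Append a row cap unless the query already has a LIMIT somewhere (sketch-level check)
    if not re.search(r"\blimit\b", statement, re.IGNORECASE):
        statement = f"{statement}\nLIMIT {max_rows}"
    return statement

print(guard_generated_sql(
    "SELECT customer_id, SUM(amount) AS revenue FROM orders GROUP BY 1 ORDER BY 2 DESC;"
))
```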

It’s time to operationalize your production data

Getting value out of your production data requires more than visualization tools. Data teams need integrations that support write operations, enforce security controls, enable real-time workflows, and connect to every system in your data stack.

Retool's integration ecosystem gives data teams secure, reliable connections to 50+ platforms while maintaining the governance and audit capabilities that production data demands. Now, you can build incident response tools, admin panels for non-technical teams, or pipeline orchestration dashboards without needing to wait on engineering capacity.

Top Data Integrations FAQs

Retool connects to databases through native integrations that handle connection pooling and query execution automatically. You create a resource by providing connection details, then:

- Write SQL queries directly in the app editor and bind results to components

- Browse databases, schemas, and tables in the schema explorer

- Query without managing connections or referencing external documentation

Retool workflows support real-time execution through webhook triggers, scheduled jobs, and Apache Kafka integration, combined with SSO, RBAC, and audit logs for security:

- Real-time execution: Webhook triggers, scheduled jobs, and Kafka event processing with configurable timeouts

- Scalability: Workflows scale to hundreds of concurrent executions with error handling

- Security: SSO for authentication, RBAC for authorization, and audit logs for tracking data access

OpenAI, Anthropic, Datadog, Slack, and Microsoft Teams are becoming essential for data teams:

- AI providers like OpenAI and Anthropic for building intelligent automation into data tools

- Observability platforms like Datadog and Sentry for monitoring pipeline health alongside application performance

- Collaboration tools like Slack and Microsoft Teams for alerting and incident response when data issues arise

The shift is toward integrations that help data teams act on information, not just display it.

Start with access control and audit logs, choose the right real-time architecture, and build in error handling from the start:

- Access control first: Define who can see what data and which actions they can take before building the interface.

- Audit logs from day one: Ensure you have visibility into how tools are used and what data is accessed.

- Right-size real-time requirements: Consider whether you need true streaming through Apache Kafka or whether polling on a schedule is sufficient.

- Error handling and alerting: Build these into workflows so failures surface quickly rather than silently breaking downstream processes.
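One common shape for that error handling: retry transient failures a few times with exponential backoff, and if the step still fails, send an alert and re-raise so downstream steps don't run on bad data. Here is a minimal sketch in plain Python; the `notify` callback (which could post to Slack), the attempt count, and the delays are assumptions for illustration.

```python
import time

def run_with_retries(step, attempts=3, base_delay=1.0, notify=print, sleep=time.sleep):
    """Run `step()`, retrying with exponential backoff; alert and re-raise on final failure."""
    for attempt in range(1, attempts + 1):
        try:
            return step()
        except Exception as exc:  # in practice, catch only errors you know are transient
            if attempt == attempts:
                notify(f"Step failed after {attempts} attempts: {exc}")
                raise
            sleep(base_delay * 2 ** (attempt - 1))  # waits 1s, 2s, 4s, ...

# Usage with a flaky stand-in step that succeeds on the third try
outcomes = iter([TimeoutError("warehouse timeout"), TimeoutError("warehouse timeout"), "ok"])

def flaky_step():
    result = next(outcomes)
    if isinstance(result, Exception):
        raise result
    return result

delays = []
print(run_with_retries(flaky_step, sleep=delays.append))  # ok
print(delays)  # [1.0, 2.0]
```

Re-raising after the alert is the important design choice: the failure stays visible to whatever runs next instead of being swallowed.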

Retool connects to data warehouses such as Snowflake, BigQuery, and Databricks through native integrations that handle connection pooling and query execution. Provide credentials in the Resources tab, then write SQL directly in the app editor and bind results to components. You get schema browsing, query caching to avoid redundant warehouse calls, and configurable timeouts for long-running jobs, all without managing connections manually.

RBAC controls which teams access which databases and what they can do. SSO authenticates through your existing identity provider. Credentials are stored centrally, so teams use them without ever seeing them. Every query logs to an audit trail. For regulated environments, self-hosting keeps data within your own infrastructure.

The big shift is from read-only dashboards to operational tools that write back. Customer admin panels that update records. Self-service reporting interfaces that let business teams answer their own questions from Snowflake or BigQuery without filing data requests. Pipeline monitoring dashboards that correlate Datadog metrics with warehouse freshness. And AI search interfaces that let people query data in plain English.

Yes. Retool integrates with OpenAI, Anthropic, Google Gemini, Amazon Bedrock, and custom providers. You can pass database query results to an LLM for analysis, classification, or summarization, all within the same workflow. Retool Vectors adds managed embedding storage for semantic search and RAG use cases.

For incident response, combine monitoring data with messaging integrations in a single operational tool. Pull Datadog metrics and warehouse query results into one incident dashboard with action buttons for remediation. Set up workflows that automatically post to Slack with context (what broke, what was expected, and a direct link to investigate) when pipeline jobs fail or data quality issues surface.